
Buy or Build your Life Sciences AI Sales Solutions?

About the Author

Richard Freeman holds an MEng in Computer Systems Engineering and a PhD in Artificial Intelligence from the University of Manchester. With over 22 years of experience in scalable big data, cloud computing, and advanced data science, he has worked with Fortune Global 500 companies and led high-impact initiatives—including Innovate UK-funded COVID-19 research and other high-risk, high-reward AI innovation projects.

In today’s rapidly evolving Life Sciences industry, harnessing Generative AI to streamline and optimize sales opportunities has moved from a futuristic vision to a practical necessity for remaining competitive: 49% of healthcare companies are focusing on Generative AI over the next 12 months[1]. Overall, Generative AI in procurement is projected to grow to $2,260 million by 2032, at a compound annual growth rate (CAGR) of 33%[2].

Yet, despite widespread consensus on AI’s potential, organizations still face a pivotal dilemma: should they buy an existing, tried-and-tested AI solution or build their own AI solution from the ground up?

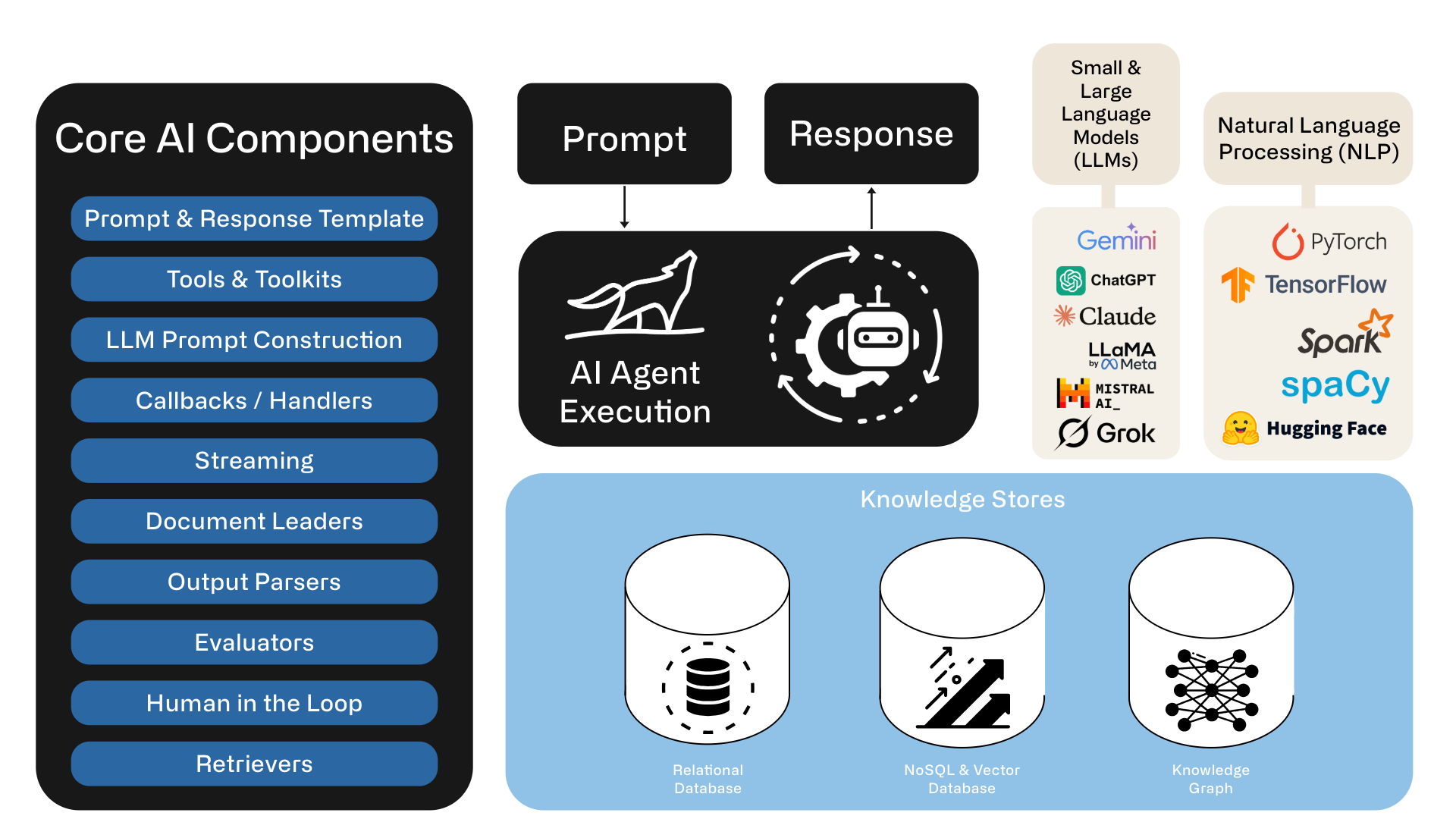

This decision is hardly trivial, and just because it’s AI doesn’t mean we can skip the best practices in architecture, software engineering, quality assurance, and monitoring. Implementing a robust AI-driven solution means navigating layers of complexity, from agentic workflows, large language models (LLMs), knowledge graphs, and natural language processing (NLP) to data orchestration and meticulous integration. Each layer comes with its own challenges, resource needs, timelines, and costs.

Complexities of Building Your Own Production Agentic AI Workflow Solution

While building a fully customized AI solution can appear enticing—offering greater control, flexibility, and potential for tailored enhancements—it is notoriously complicated to scale beyond a pilot. Consider several critical factors that organizations often underestimate:

1. Architecture & Data Engineering

- Data Orchestration and Machine Learning Challenges: Building requires complex data and machine learning orchestration involving scraping, ingestion, extraction, normalization, matching, and keeping data relevant. Life sciences data are spread across highly heterogeneous records, country-specific data schemas, and multiple languages, among other sources of variation. Ensuring consistent and accurate data extraction and mapping is an immense, resource-intensive undertaking.

- Document & Knowledge Base Pre-processing: Ingesting data from various sources, such as SharePoint, Google Drive, or websites, can be far more complicated than it first appears. Different platforms may use unique authentication mechanisms, file structures, and access controls. Ensuring each source is parsed and standardized consistently takes careful planning and robust tooling. Metadata like version history or permissions often needs to be preserved for accuracy and compliance. If you skip thorough preprocessing, downstream processes (like text retrieval or indexing) may fail unexpectedly.

- Document Formats & PDF Issues: PDFs pose a unique challenge because they can contain anything from embedded fonts to scanned images, making extraction of clean text non-trivial. Other file types, like XLSX or DOCX, each come with their own peculiarities and parsing libraries. Even seemingly standard PDFs may have layout quirks that break text continuity or scramble logical paragraphs. Handling these inconsistencies often requires specialized conversion tools and a fair amount of manual intervention. Without a solid strategy for handling varied file formats, your retrieval-augmented generation (RAG) system risks being filled with fragmented or inaccurate text.
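To make the normalization and metadata-preservation points more concrete, here is a minimal Python sketch that maps country-specific records onto one common schema while carrying the source metadata along. The country codes, field names, and the `FIELD_MAPS` table are hypothetical examples invented for illustration, not any real tender or procurement schema.

```python
"""Minimal sketch: map country-specific records onto one common schema.

Assumption: FIELD_MAPS, its field names, and CommonRecord are hypothetical;
a real pipeline would load mappings from configuration.
"""
from dataclasses import dataclass, field
from typing import Any

# Hypothetical per-country mappings: source field name -> common field name.
FIELD_MAPS: dict[str, dict[str, str]] = {
    "FR": {"intitule": "title", "acheteur": "buyer", "date_limite": "deadline"},
    "DE": {"titel": "title", "auftraggeber": "buyer", "frist": "deadline"},
    "GB": {"title": "title", "buyer_name": "buyer", "closing_date": "deadline"},
}
REQUIRED = {"title", "buyer", "deadline"}


@dataclass
class CommonRecord:
    title: str
    buyer: str
    deadline: str
    # Source metadata (origin, version, permissions) kept for audit and compliance.
    metadata: dict[str, Any] = field(default_factory=dict)


def normalize(country: str, raw: dict[str, Any], metadata: dict[str, Any]) -> CommonRecord:
    mapping = FIELD_MAPS.get(country)
    if mapping is None:
        raise ValueError(f"No schema mapping for country {country!r}")
    mapped = {common: raw[src] for src, common in mapping.items() if src in raw}
    missing = REQUIRED - mapped.keys()
    if missing:
        # Fail loudly instead of passing incomplete records downstream.
        raise ValueError(f"Record from {country} is missing fields: {sorted(missing)}")
    # Trim whitespace so equal values from different sources compare equal.
    cleaned = {key: str(value).strip() for key, value in mapped.items()}
    return CommonRecord(**cleaned, metadata={"country": country, **metadata})
```

For example, `normalize("FR", {"intitule": " Stents coronaires ", "acheteur": "CHU Lyon", "date_limite": "2025-03-01"}, {"source": "sharepoint", "version": 3})` yields a record titled `"Stents coronaires"` whose metadata still records the country, source, and version. Failing fast on missing fields is a deliberate choice here: a silently incomplete record is harder to trace once it reaches the AI agent.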

Without regularly updated, well-organized, structured data and the right context, most AI systems will be slow and expensive at scale and will not produce the expected outputs. It’s the garbage-in, garbage-out problem, or, in modern terms, what I call feeding a Data Swamp (data/documents/media) to the AI agent and expecting it to figure it out, which leads to creatively fabricated responses!
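One simple defence against feeding a Data Swamp to an agent is a quality gate that runs before documents are indexed. The sketch below is a minimal illustration under stated assumptions: the thresholds (`MIN_CHARS`, `MIN_ALPHA_RATIO`, `MAX_AGE`) are hypothetical values, and real checks would be tuned against your own corpus.

```python
"""Minimal sketch of a pre-ingestion quality gate (hypothetical thresholds)."""
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Document:
    doc_id: str
    text: str
    last_updated: datetime


# Hypothetical thresholds; tune them against your own corpus.
MIN_CHARS = 200          # very short extractions often indicate a scanned or failed PDF
MIN_ALPHA_RATIO = 0.6    # a low share of letters suggests garbled or fragmented text
MAX_AGE = timedelta(days=365)


def quality_issues(doc: Document, now: datetime) -> list[str]:
    issues = []
    stripped = doc.text.strip()
    if len(stripped) < MIN_CHARS:
        issues.append("too_short")
    non_space = [c for c in stripped if not c.isspace()]
    if non_space:
        alpha_ratio = sum(c.isalpha() for c in non_space) / len(non_space)
        if alpha_ratio < MIN_ALPHA_RATIO:
            issues.append("garbled_text")
    if now - doc.last_updated > MAX_AGE:
        issues.append("stale")
    return issues


def partition(docs: list[Document], now: Optional[datetime] = None):
    """Split documents into those fit for indexing and those needing review."""
    now = now or datetime.now(timezone.utc)
    accepted, rejected = [], {}
    for doc in docs:
        problems = quality_issues(doc, now)
        if problems:
            rejected[doc.doc_id] = problems
        else:
            accepted.append(doc)
    return accepted, rejected
```

Rejected documents are returned with their reasons rather than discarded, so a person or a re-extraction step (for example OCR for scanned PDFs) can fix them instead of letting them silently pollute the index.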

2. Model Customization & Prompt Engineering

- Prompt Engineering and Domain Specificity: AI agents for life sciences require domain-specific embeddings, fine-tuning, and rigorous prompt engineering to be accurate. An “off-the-shelf” generic LLM won’t deliver precise healthcare content or accurately understand nuanced domain criteria without extensive tuning and validation.

- Model Pipelines: A robust model pipeline integrates comprehensive version control to systematically track changes, updates, and iterations throughout the development process. This approach enables efficient rollbacks to stable versions when new iterations underperform or introduce issues, ensuring minimal downtime and consistent performance.

If your AI model is truly in production, making you money, saving time, or helping customers, it can also go rogue, wild, or wrong and do the opposite. How quickly can you revert it to its best working state?
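A minimal sketch of the rollback idea follows, assuming each release bundles a model name, a prompt template, and an offline evaluation score. The `Release` and `ReleaseRegistry` classes and the model names are hypothetical; in practice this role is usually played by an experiment-tracking or model-registry tool.

```python
"""Minimal sketch of a versioned release registry with rollback.

Hypothetical design: every release records its model name, prompt template,
and an offline evaluation score, so a misbehaving release can be replaced
quickly by the best-scoring earlier one.
"""
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Release:
    version: int
    model: str
    prompt_template: str
    eval_score: float  # e.g. accuracy on a curated test set, from 0.0 to 1.0


class ReleaseRegistry:
    def __init__(self) -> None:
        self._releases: list[Release] = []
        self.active: Optional[Release] = None

    def publish(self, model: str, prompt_template: str, eval_score: float) -> Release:
        release = Release(len(self._releases) + 1, model, prompt_template, eval_score)
        self._releases.append(release)
        self.active = release  # newest release goes live
        return release

    def rollback_to_best(self) -> Release:
        """Reactivate the highest-scoring release other than the active one."""
        candidates = [r for r in self._releases if r is not self.active]
        if not candidates:
            raise RuntimeError("No other release to roll back to")
        self.active = max(candidates, key=lambda r: r.eval_score)
        return self.active
```

Because releases are immutable and never deleted, reverting is a pointer change rather than a rebuild, which is what keeps downtime minimal.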

3. Quality Assurance (QA) & Monitoring

- Stochastic Nature and Extraction Variability: LLMs exhibit inherent stochasticity; identical prompts may yield variable outputs. This variability makes consistency difficult to achieve, especially in regulated industries like Life Sciences, where uniformity and predictability are paramount. DIY solutions thus necessitate intricate preprocessing and post-processing validation steps, including explicit frameworks and guardrails for continuous quality management.

- Accuracy Problems in Production: During testing, your model might work on a limited set of well-curated data and predictable queries, creating a false sense of security. However, in real-world usage, users can ask unanticipated questions or supply ambiguous input that the system hasn’t been trained or tuned for. This can result in incorrect or incomplete answers at critical times, damaging trust and adoption. Monitoring and continual validation become essential once the system is live. You may need to introduce feedback loops and additional fine-tuning based on actual user interactions to maintain high accuracy.

- Hallucinations: Large Language Models can generate coherent yet entirely fabricated information, known as “hallucinations.” These outputs often appear authoritative, making them dangerous for decision-making if users don’t double-check the facts. Minimizing hallucinations requires careful prompt engineering, model configuration, or the addition of retrieval-based evidence to back up answers. Even then, you need robust post-processing checks to filter out spurious content. Ultimately, effective oversight—human or algorithmic—is essential for mitigating the risk of misleading answers.

- Response Quality Assurance: Evaluating the quality of AI-generated text isn’t as straightforward as running unit tests or verifying numeric outputs. You need to assess factors like relevance, factual correctness, clarity, and style—many of which are inherently subjective. Automated metrics (e.g., BLEU, ROUGE) often don’t reflect real-world user satisfaction or correctness. Consequently, many organizations introduce human-in-the-loop review to catch errors and gather feedback. However, scaling this human QA process can be labor-intensive and expensive, especially for large deployments.

- Tip: create your own curated dataset for automated testing and for measuring quality.
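Building on the curated-dataset tip, here is a minimal Python sketch of an evaluation harness. It runs each case several times to expose stochastic variation and applies a crude grounding check (is the answer literally present in the source text?) as a rough proxy for hallucination. The `extract` argument is a hypothetical stand-in for your LLM-backed extraction call, and exact-match scoring is a simplifying assumption; real evaluations usually need more forgiving comparisons.

```python
"""Minimal sketch of an evaluation harness over a curated test set.

Assumption: `extract` is a hypothetical callable wrapping your LLM extraction
step; exact string match is used as a deliberately simple correctness metric.
"""
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    source_text: str
    expected: str


@dataclass
class Report:
    accuracy: float     # share of all runs that matched the expected value
    consistency: float  # share of cases where every run gave the same answer
    ungrounded: int     # answers not found in the source text (possible hallucinations)


def evaluate(cases: list[EvalCase], extract: Callable[[str], str], runs: int = 5) -> Report:
    if not cases or runs < 1:
        raise ValueError("Need at least one case and one run")
    correct = consistent = ungrounded = 0
    for case in cases:
        # Repeat the same input to surface run-to-run variability.
        answers = [extract(case.source_text).strip() for _ in range(runs)]
        correct += sum(a == case.expected for a in answers)
        if len(set(answers)) == 1:
            consistent += 1
        # Crude grounding check: case-insensitive substring match.
        source = case.source_text.lower()
        ungrounded += sum(a.lower() not in source for a in answers)
    return Report(
        accuracy=correct / (len(cases) * runs),
        consistency=consistent / len(cases),
        ungrounded=ungrounded,
    )
```

With a fake extractor that answers the first case correctly but invents a date for `EvalCase("Closing date 15 April 2025", "15 April 2025")`, three runs per case give an accuracy of 0.5, a consistency of 1.0, and three ungrounded answers. That illustrates why consistency alone is not enough: a model can be reliably wrong.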

4. Software Engineering & Integration

- Integration with Existing Systems: Your RAG or Agentic AI solutions rarely operate in isolation; they often need to integrate with databases, CRMs, ticketing systems, or other enterprise applications. Each system has its own data schema, APIs, authentication processes, and performance constraints that must be respected. A mismatch or lack of synchronization in these integrations can lead to erroneous data, delays, or even security vulnerabilities. Moreover, once users rely on automated answers drawn from external systems, they expect data to be up-to-date. Orchestration tools and well-documented APIs become vital to avoid integration chaos.

- Software Engineering: By embracing a holistic framework that integrates DevOps, DataOps, MLOps, and AIOps, and by adhering to best software engineering practices, design patterns, and principles, organizations can streamline data pipelines, automate deployments, and achieve scalable, secure, and efficient systems across the entire development, deployment, and operational lifecycle.
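On the integration point that users expect up-to-date data, one common pattern is a time-to-live (TTL) cache in front of each external system: answers reuse recent data, and anything older than the TTL is re-read from the source. This is a minimal sketch, assuming `fetch` is a hypothetical callable wrapping a CRM or database API; the clock is injectable so the behaviour can be tested without waiting.

```python
"""Minimal sketch: serve integration data only while it is fresh enough.

Assumption: `fetch` is a hypothetical callable wrapping an external system's
API (CRM, ERP, ticketing); the TTL value is a per-integration design choice.
"""
import time
from typing import Any, Callable


class FreshCache:
    def __init__(self, fetch: Callable[[str], Any], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: dict[str, tuple[float, Any]] = {}  # key -> (fetched_at, value)

    def get(self, key: str) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]       # cached value is still within its TTL
        value = self._fetch(key)  # missing or stale: re-read from the source system
        self._entries[key] = (now, value)
        return value
```

The TTL makes the freshness guarantee explicit and tunable per system: a short TTL for pricing or stock levels, a longer one for slowly changing reference data.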

5. Compliance, Security & Governance

- Compliance & Audit Requirements: Regulations and standards such as GDPR, HIPAA, ISO, or SOC 2 mandate strict controls over how data is accessed, processed, and stored. When dealing with text ingestion and generation, you must track the flow of potentially sensitive information and maintain audit logs. Failure to do so can result in non-compliance penalties, legal troubles, and reputational damage. Additionally, meeting these standards often requires specialized security reviews, documentation, and secure cloud deployments. Balancing agility and innovation with strict regulatory requirements can significantly slow development cycles.

- Security & Data Leakages: Ingesting private, proprietary, or personal data into AI systems raises red flags for security-minded stakeholders. An improperly configured system might inadvertently expose confidential information in generated responses, store that data in logs accessible to unauthorized personnel, or send it to public GenAI APIs. Private models, encryption at rest and in transit, robust access controls, and careful logging policies become table stakes. You also have to consider potential vulnerabilities in any third-party integrations or APIs. If you don’t build with security in mind from the outset, you risk severe breaches that can undermine the entire project.
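As an illustration of combining leakage prevention with audit logging, the sketch below redacts obvious personal data before text leaves your boundary and records an audit entry for each outbound payload. The two regex patterns are illustrative assumptions and far from exhaustive (they will miss names and patient identifiers, and the phone pattern can also match some dates); production systems need dedicated PII/PHI detection. The `audit_log` list is a hypothetical stand-in for an append-only store.

```python
"""Minimal sketch: redact obvious personal data and audit what is sent out.

Assumptions: PATTERNS is illustrative only and produces false positives and
negatives; `audit_log` is a plain list standing in for an append-only store.
"""
import hashlib
import json
import re
from datetime import datetime, timezone

PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)  # replace matches, count them
        counts[label] = n
    return text, counts


def prepare_for_external_api(text: str, user: str, audit_log: list[str]) -> str:
    redacted, counts = redact(text)
    audit_log.append(json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        # Hash of the payload actually sent, so the log itself stores no raw text.
        "sha256": hashlib.sha256(redacted.encode("utf-8")).hexdigest(),
        "redactions": counts,
    }))
    return redacted
```

For example, `"Contact jane.doe@hospital.org or +44 20 7946 0958."` becomes `"Contact [EMAIL] or [PHONE]."`, and the audit entry records who sent it, when, and how many values were redacted.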

Tip: Embed security from the ground up and involve the right stakeholders early.

Leveraging Existing Agentic Workflow Solutions

In contrast, buying and implementing an existing specialized life sciences, medtech, pharmaceutical, or biotech agentic AI solution mitigates these complexities significantly. Specialized solutions come ready-equipped with agentic workflows, prebuilt reflection patterns, domain-specific embeddings, and optimized integration modules. Having already been tested and penetration-tested, they provide security and compliance out of the box, with proven consistency in performance and minimized variability.

Immediate Usability and Integration

Prebuilt solutions are typically designed to seamlessly integrate into existing ERP, CRM, and CPQ workflows, significantly reducing implementation friction. Users benefit from predefined schemas, consistent data extraction, and multi-language support, allowing fast deployment and immediate usability without additional manual overhead.

Scalability and Cost Efficiency

At scale, ready-to-use solutions reduce the total cost of ownership through optimized infrastructure, centralized updates, and vendor-provided support. Organizations can immediately benefit from consistent, validated, and standardized analytics without the resource drain of continuous maintenance and internal troubleshooting.

Real-World AI Use Cases from Working with the Top Ten Medtech Firms

Consider scenarios such as capturing highly detailed award criteria from diverse documentation, accurately extracting nuanced healthcare data, performing country-specific analytics across varying languages, and normalizing data values and quantities. At a small scale, you can resolve some of this with prompt engineering and manual document processing. In an enterprise-grade solution, however, you may have thousands of opportunities with multi-language scanned documents that need to be processed daily. Such tasks involve highly sophisticated AI orchestration: they are painstakingly complicated to replicate accurately in-house, but specialized vendors that have already done the extensive groundwork and built production-grade core AI components can address them efficiently.
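Normalizing quantities across countries is a good example of why this is harder than it looks: `"1.200"` means twelve hundred in Germany but 1.2 in the UK. The minimal sketch below converts locale-formatted quantities into base units. The per-country separator table and the pack-size catalogue are hypothetical examples; a production system would rely on a full locale library and a curated unit catalogue.

```python
"""Minimal sketch: normalize locale-formatted quantities to base units.

Assumptions: DECIMAL_SEPARATOR and UNITS_PER_PACK are hypothetical examples,
not a complete locale or product catalogue.
"""

# Hypothetical: the decimal separator used in each country's documents.
DECIMAL_SEPARATOR = {"GB": ".", "US": ".", "DE": ",", "FR": ",", "ES": ","}

# Hypothetical: how many base units one pack contains.
UNITS_PER_PACK = {"unit": 1, "box of 10": 10, "box of 50": 50}


def parse_number(raw: str, country: str) -> float:
    dec = DECIMAL_SEPARATOR[country]
    thousands = "," if dec == "." else "."
    # Drop thousands separators, including spaces and non-breaking spaces.
    cleaned = raw.strip()
    for sep in (thousands, " ", "\u00a0", "\u202f"):
        cleaned = cleaned.replace(sep, "")
    return float(cleaned.replace(dec, "."))


def to_base_units(raw_qty: str, pack: str, country: str) -> float:
    return parse_number(raw_qty, country) * UNITS_PER_PACK[pack]
```

For instance, `to_base_units("1.200", "box of 50", "DE")` gives 60000.0, while `parse_number("1.200", "GB")` gives 1.2. Multiply that ambiguity by dozens of countries, units, and scanned formats, and the case for production-grade components becomes clear.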

Additionally, vendors providing specialized Life Sciences AI solutions have performed extensive penetration testing, satisfying rigorous security standards required for sensitive data handling and regulatory compliance. Attempting such certification independently is both resource-intensive and risk-prone.

Buy vs. Build: Strategic Considerations

To build or to buy? Answering this pivotal question revolves around several key strategic considerations:

- Time-to-Value: DIY solutions are slow to develop, while buying a prebuilt agentic workflow solution provides quicker ROI and value realization.

- Complexity and Risk: Building involves substantial complexity, internal expertise, and continuous risk mitigation, while buying mitigates these significantly.

- Domain Specialization: Existing solutions are already extensively tuned and validated against the nuances of pharma/biotech/medtech-specific criteria and regulations.

- Scalability and Maintenance: At large scale, prebuilt solutions offer significantly greater operational efficiency, lowering overhead and ongoing maintenance burdens.

Conclusion: Thoughtful Decisions, Strategic Impact

While the allure of bespoke AI solutions is understandable, the significant technical, domain-specific, regulatory, and operational complexities associated with building your own solution in Life Sciences cannot be overstated. Specialized AI agentic workflow platforms offer solid integration, robust security and compliance, proven domain specificity, and optimal scalability.

Organizations keen on strategic advantage and swift ROI realization will likely find that choosing to buy an existing specialized Life Sciences AI solution significantly reduces complexity and cost, ensuring robust and immediate operational enhancement. Building your own AI solution, though attractive in theory, presents substantial and nuanced practical hurdles that few organizations are fully prepared to overcome efficiently.

In navigating AI in Life Sciences, recognizing when to leverage existing specialist AI partners such as Vamstar (I’m the CTO and co-founder) can be your greatest strategic advantage, enabling rapid deployment and return on investment.